Internet Metrics: Latency vs Throughput

Two metrics matter most when measuring the performance of an Internet connection: latency, which measures how quickly a data packet gets across the network, and throughput, which measures how much data the network can transfer per second.

These two concepts are related but distinct. In this post, we will look at what each one means in detail and dig into their implications for practical use cases such as video calling and stock trading.


Throughput (a.k.a. bandwidth)

Throughput refers to the amount of data that can be transmitted over a network in a given time frame, typically measured in megabits per second (Mbps) or gigabits per second (Gbps). Uplink (sending) and downlink (receiving) throughput can differ, sometimes by a lot, and this asymmetry is a deliberate design choice by ISPs.

Higher throughput means the network can move more data per second. A common rule of thumb, based on YouTube's own recommendations, is about 5 Mbps to watch YouTube videos at 1080p resolution and about 20 Mbps for 4K. However, these are not fixed numbers; the minimum throughput needed for a given resolution depends on factors such as the video codec and compression, and on techniques like adaptive bitrate streaming.

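Tip: megabits, megabytes, and gigabytes are just ways to express large amounts of data, but Mb (megabit) and MB (megabyte) mean different things! One byte is 8 bits, so a 100 Mbps connection moves at most 12.5 MB per second.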
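To make the bits-versus-bytes difference concrete, here is a small sketch that converts an advertised speed in Mbps into megabytes per second and estimates the best-case download time for a file. The helper names are hypothetical, it uses decimal units (1 MB = 1,000,000 bytes), and it deliberately ignores latency, protocol overhead, and congestion, so real downloads will take longer.

```python
BITS_PER_BYTE = 8


def mbps_to_megabytes_per_second(mbps: float) -> float:
    """Convert a link speed in megabits per second (Mbps) to megabytes per second (MB/s)."""
    return mbps / BITS_PER_BYTE


def download_seconds(file_size_mb: float, mbps: float) -> float:
    """Best-case time to download `file_size_mb` megabytes over an `mbps` link.

    Ignores latency, protocol overhead and congestion, so real downloads take longer.
    """
    return file_size_mb / mbps_to_megabytes_per_second(mbps)


if __name__ == "__main__":
    # A "100 Mbps" plan moves at most 12.5 megabytes per second.
    print(mbps_to_megabytes_per_second(100))  # 12.5
    # A 1,000 MB (1 GB) file over that plan takes at least 80 seconds.
    print(download_seconds(1000, 100))  # 80.0
```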

Network service providers very often advertise the maximum throughput they will deliver to a given customer or on a given subscription plan. For instance, India’s leading telecom provider Jio has the following section on its landing page for “AirFiber”:

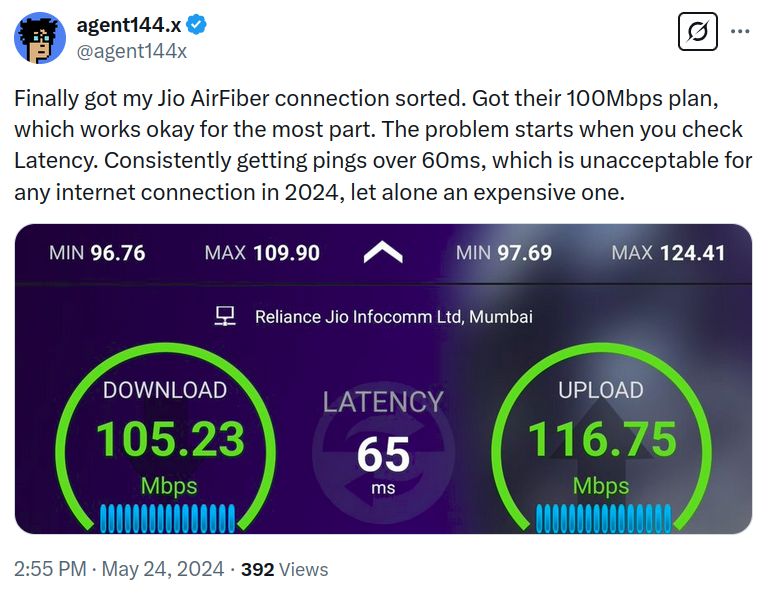

Notice how the entire page does not mention latency at all! This kind of advertisement paints only half the picture, and as a customer you are left without the other half. If you plan to use the network for latency-sensitive applications, the advertisements may not show you any ping figures, and you may need to dig around on social media for real-world reports.

Latency (a.k.a. ping)

Latency refers to the time it takes for a data packet to travel from one point to another, usually measured in milliseconds (ms). Because it measures a delay, lower latency is better. When measured from a client device, such as your web browser, this number is usually the round-trip time: how long it takes for a packet to reach the server and for the reply to come back to the client. Latencies of up to about 70 or 80 milliseconds are usually considered good enough.
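The round-trip measurement described above can be sketched with plain TCP sockets: send a tiny message, wait for the echo, and time the gap. This is a minimal illustration, assuming a server that echoes bytes back; since a public echo server cannot be assumed, the sketch starts its own on localhost, so the numbers will be far smaller than real Internet latencies. The function names are hypothetical, and this times an application-level TCP exchange rather than the ICMP packets used by the `ping` command.

```python
from __future__ import annotations

import socket
import threading
import time


def start_echo_server() -> tuple[socket.socket, int]:
    """Start a TCP echo server on localhost, standing in for a remote server."""
    server = socket.socket(socket.AF_INET, socket.SOCK_STREAM)
    server.bind(("127.0.0.1", 0))  # port 0 lets the OS pick a free port
    server.listen(1)

    def serve() -> None:
        conn, _ = server.accept()
        with conn:
            while True:
                data = conn.recv(64)
                if not data:  # client closed the connection
                    break
                conn.sendall(data)  # echo the bytes straight back

    threading.Thread(target=serve, daemon=True).start()
    return server, server.getsockname()[1]


def measure_rtt_ms(host: str, port: int, samples: int = 5) -> list[float]:
    """Time `samples` request/reply round trips over one TCP connection."""
    message = b"ping"
    rtts = []
    with socket.create_connection((host, port)) as sock:
        # Send small messages immediately instead of letting TCP batch them.
        sock.setsockopt(socket.IPPROTO_TCP, socket.TCP_NODELAY, 1)
        for _ in range(samples):
            start = time.perf_counter()
            sock.sendall(message)
            received = b""
            while len(received) < len(message):  # wait for the full echo
                chunk = sock.recv(64)
                if not chunk:
                    raise ConnectionError("server closed the connection")
                received += chunk
            rtts.append((time.perf_counter() - start) * 1000)
    return rtts


if __name__ == "__main__":
    server, port = start_echo_server()
    print([round(rtt, 3) for rtt in measure_rtt_ms("127.0.0.1", port)])
    server.close()
```

Taking several samples rather than one matters in practice, because individual round trips on a real network vary.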

High latency makes real-time communication difficult and, above some threshold, impossible. Common internet activities such as gaming and audio/video calling suffer under high latency, because these applications need to update the screen or speaker based on responses received from the other party “quickly enough”. Quick enough means that the delay goes unnoticed by the human user, so the threshold (in milliseconds) ultimately depends on human perception. In calls, high latency can result in awkward pauses, disrupted conversations, or synchronization issues, such as lip movements lagging behind speech. In online gaming, high latency leads to lag, where actions in the game are delayed relative to the player’s input, severely affecting the gaming experience. In stock trading, high latency can cause slippage, where the price moves between the moment an order is sent and the moment it is executed, which can cost the trader money.

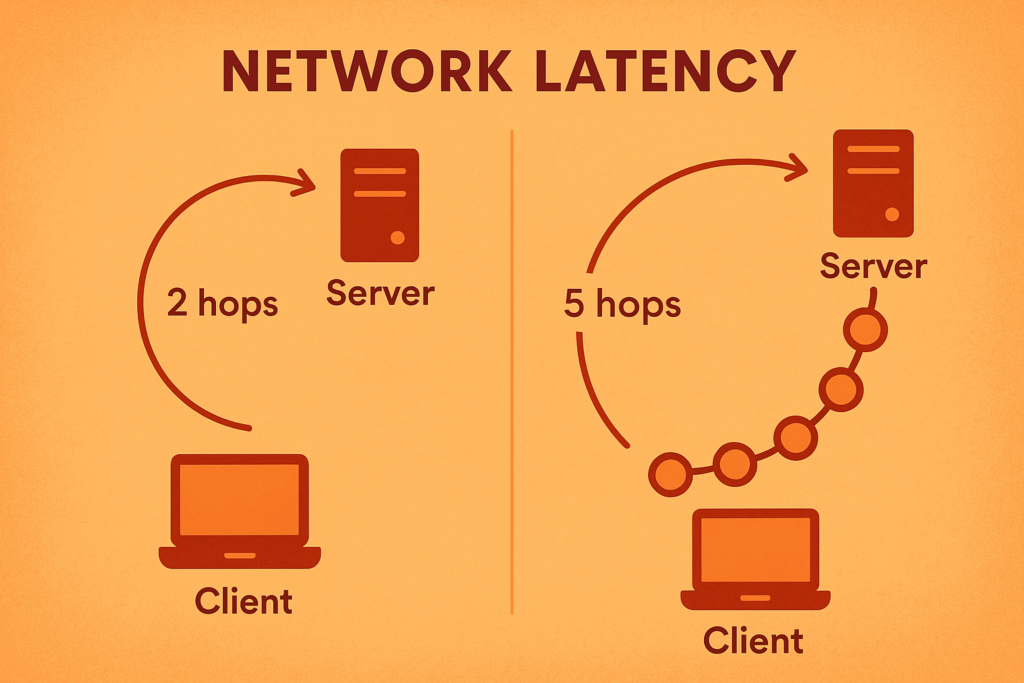

One common mistake in latency measurement is choosing an irrelevant destination server. Suppose your online gaming partner is in city A, and you measure latency with a web app that picks a server in city B. If cities A and B are far apart (not just geographically but also in the number of network hops), the measurement will be a poor proxy for the latency you actually experience when playing with your partner. Beyond server choice, the physical distance between nodes, network congestion, and jitter (variation in latency from one packet to the next, which is common on wireless links) all shape how latency and throughput behave in real-world use. For these reasons, many online games provide tools to measure the actual latency between your device and the game server or peer. Applications such as Google Meet or Zoom, which are also latency-sensitive, generally do not surface such measurements as prominently.
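Two of the factors above lend themselves to quick arithmetic. Physical distance sets a floor on latency, because signals in optical fiber travel at roughly 200,000 km/s (about two-thirds of the speed of light in a vacuum). Jitter can be summarized from a series of latency samples. The sketch below shows both; the helper names are hypothetical, the fiber speed is an approximation, real routes are longer than the straight-line distance, and this simple jitter measure (average change between consecutive samples) is only one of several definitions; RTP, for example, uses a smoothed variant.

```python
from __future__ import annotations

# Light in optical fiber travels at roughly two-thirds of its vacuum speed.
SPEED_IN_FIBER_KM_PER_S = 200_000


def min_round_trip_ms(distance_km: float) -> float:
    """Lower bound on round-trip time over a fiber path of `distance_km` each way."""
    one_way_s = distance_km / SPEED_IN_FIBER_KM_PER_S
    return 2 * one_way_s * 1000


def mean_jitter_ms(rtts_ms: list[float]) -> float:
    """Average absolute change between consecutive latency samples."""
    if len(rtts_ms) < 2:
        return 0.0
    diffs = [abs(later - earlier) for earlier, later in zip(rtts_ms, rtts_ms[1:])]
    return sum(diffs) / len(diffs)


if __name__ == "__main__":
    print(min_round_trip_ms(1_000))  # about 10 ms for cities 1,000 km apart
    print(mean_jitter_ms([20, 25, 20, 25]))  # 5.0: latency swings by 5 ms each sample
```

Even with a perfect network, cities 1,000 km apart cannot have a round trip much below 10 ms, which is why a nearby test server can badly underestimate the latency to a distant peer.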

The network stack design plays a significant role in both latency and throughput. The network stack is the suite of protocols responsible for managing data transmission over the internet, with layers like the physical layer, the transport layer (e.g., TCP or UDP), and the application layer. The interactions between these layers, along with network routing, throttling mechanisms, and congestion control, all impact the final experience users have with their internet connections.

For example, TCP, the protocol commonly used for reliable communication, adds latency because it must ensure that all packets are received correctly. It does so by acknowledging received data and retransmitting lost packets, which improves reliability but also adds overhead. Conversely, UDP, which is often used for real-time applications like gaming or video calling, sacrifices reliability for speed: it introduces less latency at the cost of some data loss.
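TCP's acknowledgements also tie latency and throughput together. A sender can only have one window of unacknowledged data in flight, so a single connection can move at most about one window per round trip, and the same window yields less throughput as latency grows. The sketch below is a simplified model under that assumption: it ignores congestion control, packet loss, and the window scaling option that lets modern systems use much larger windows. The function names are hypothetical.

```python
def max_tcp_throughput_mbps(window_bytes: int, rtt_ms: float) -> float:
    """Ceiling on one TCP connection's throughput: one window of data per round trip."""
    bits_per_round_trip = window_bytes * 8
    return bits_per_round_trip / (rtt_ms / 1000) / 1_000_000


def bandwidth_delay_product_bytes(link_mbps: float, rtt_ms: float) -> float:
    """Bytes that must be in flight (unacknowledged) to keep a link of `link_mbps` busy."""
    return link_mbps * 1_000_000 * (rtt_ms / 1000) / 8


if __name__ == "__main__":
    # 65,535 bytes: the largest window TCP can advertise without window scaling.
    print(round(max_tcp_throughput_mbps(65_535, 10), 2))  # 52.43
    print(round(max_tcp_throughput_mbps(65_535, 100), 2))  # 5.24
    # Filling a 100 Mbps link at 50 ms RTT needs about 625,000 bytes in flight.
    print(bandwidth_delay_product_bytes(100, 50))
```

With the same window, raising the round-trip time from 10 ms to 100 ms cuts the ceiling tenfold, which is one reason a high-latency link can feel slow even when its advertised throughput is high.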

Measuring And Improving Your Speedtest Results

Head over to fast.com or speedtest.net to measure the performance of your network at any given time. These sites work in desktop as well as mobile browsers and report both latency and throughput. Speedtest also lets you choose from thousands of server locations around the world, which gives a more complete view of how your network performs toward different destinations.
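At its core, a throughput test transfers a known amount of data and divides the bits moved by the time taken; the real sites add refinements such as parallel connections and careful server selection, whose details are not covered here. The sketch below shows only the core idea, using a pair of connected local sockets so it runs without a network. The function name is hypothetical, and the result reflects your computer's own speed over loopback, not your Internet connection.

```python
import socket
import threading
import time


def measure_loopback_throughput_mbps(total_bytes: int = 50_000_000,
                                     chunk_size: int = 64 * 1024) -> float:
    """Push `total_bytes` through a pair of connected local sockets and return Mbps."""
    sender, receiver = socket.socketpair()
    payload = b"\x00" * chunk_size

    def send_all() -> None:
        remaining = total_bytes
        while remaining > 0:
            n = min(chunk_size, remaining)
            sender.sendall(payload[:n])
            remaining -= n
        sender.shutdown(socket.SHUT_WR)  # tell the receiver no more data is coming

    start = time.perf_counter()
    worker = threading.Thread(target=send_all)
    worker.start()
    received = 0
    while True:
        chunk = receiver.recv(chunk_size)
        if not chunk:  # sender finished and shut down its side
            break
        received += len(chunk)
    elapsed = time.perf_counter() - start
    worker.join()
    sender.close()
    receiver.close()
    # Throughput = bits transferred / seconds elapsed, scaled to megabits.
    return received * 8 / elapsed / 1_000_000


if __name__ == "__main__":
    print(f"{measure_loopback_throughput_mbps():.0f} Mbps over loopback")
```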

If your measured throughput is below the number advertised by your ISP, you may need to optimize your router setup, for example by using the 5 GHz band instead of the 2.4 GHz band, or by placing the router in a more central location in the building. Alternatively, for desktops and laptops, you could connect to the router with an Ethernet cable, bypassing any wireless problems completely.

If you consistently see a ping (latency) above 200 ms, consider upgrading your networking devices and/or talking to your ISP. You can also change advanced router settings such as the maximum transmission unit (MTU) or transmit power, but those are beyond the scope of this article.
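The word "consistently" matters: a single slow result can be a temporary spike. One way to apply the rules of thumb in this article is to take several ping readings and look at the median, which one outlier cannot drag up. The sketch below assumes you already have a list of readings in milliseconds; the function name and labels are hypothetical, the 80 ms and 200 ms thresholds come from this article, and the "fair" band in between is simply what falls between those two rules of thumb.

```python
from __future__ import annotations

from statistics import median

GOOD_ENOUGH_MS = 80  # upper end of the "good enough" range mentioned earlier
HIGH_PING_MS = 200  # the "consistently above 200 ms" threshold


def assess_ping(samples_ms: list[float]) -> str:
    """Label a connection from repeated ping readings, using the median reading."""
    if not samples_ms:
        raise ValueError("need at least one latency sample")
    typical = median(samples_ms)
    if typical > HIGH_PING_MS:
        return "high"  # consistently slow: check equipment or talk to the ISP
    if typical <= GOOD_ENOUGH_MS:
        return "good"
    return "fair"  # between the two rules of thumb


if __name__ == "__main__":
    print(assess_ping([30, 35, 400, 32]))  # good: a single 400 ms spike is ignored
    print(assess_ping([250, 240, 260, 90]))  # high: most readings exceed 200 ms
    print(assess_ping([120, 110, 130]))  # fair
```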

Summary

Latency and throughput measure distinct but related performance aspects of an Internet connection. For latency, lower is better, and for throughput, higher is better. Some applications rely more on having low latency but do not really care that much about throughput. Other applications require a high throughput but are fairly tolerant of high latency. Finally, some applications require good performance on both.
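A rough model shows why different applications care about different metrics: the time to complete one request is approximately one round trip plus the time to push the data through the link. The sketch below is a simplification that ignores connection setup, TCP slow start, and window limits; the function name and the 1 KB and 1 GB sizes (decimal units) are illustrative assumptions.

```python
def transfer_time_ms(size_bytes: int, latency_ms: float, throughput_mbps: float) -> float:
    """Rough time for one request/response: a round trip plus time to send the data."""
    sending_ms = size_bytes * 8 / (throughput_mbps * 1_000_000) * 1000
    return latency_ms + sending_ms


if __name__ == "__main__":
    connections = {"low latency, low throughput": (20, 10),
                   "high latency, high throughput": (200, 1000)}
    for label, (latency_ms, mbps) in connections.items():
        small = transfer_time_ms(1_000, latency_ms, mbps)  # e.g. a small game update
        large = transfer_time_ms(1_000_000_000, latency_ms, mbps)  # e.g. a 1 GB download
        print(f"{label}: 1 KB in {small:.1f} ms, 1 GB in {large / 1000:.1f} s")
```

The small message takes 20.8 ms on the first connection and 200.0 ms on the second, so latency dominates; the 1 GB download takes about 800 s on the first and 8.2 s on the second, so throughput dominates.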

Latency and throughput are not static numbers, and may vary during the day.

Internet service providers often advertise throughput while ignoring latency, which is an example of dubious marketing.